Visual similarity search

Query your hospital's archive by the image itself — not by patient name, study date, or report keyword. The encoder turns each study into a high-dimensional embedding; nearest-neighbor retrieval surfaces visually analogous prior cases in milliseconds, each linked to its confirmed clinical outcome from the EHR.
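A rough sketch of the retrieval step described above follows. It assumes each study has already been encoded into a fixed-length embedding vector; the encoder itself is not shown. It ranks archived studies by cosine similarity to the query. The `ArchivedStudy` type, its fields, and `find_similar` are hypothetical names chosen for illustration. This brute-force scan is only meant to show the idea: an archive-scale deployment would more plausibly use an approximate nearest-neighbor index.

```python
import heapq
import math
from dataclasses import dataclass


@dataclass
class ArchivedStudy:
    # Hypothetical record: an archived study, its precomputed embedding,
    # and the confirmed outcome linked from the EHR.
    study_id: str
    embedding: list[float]
    outcome: str


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_similar(
    query: list[float], archive: list[ArchivedStudy], k: int
) -> list[tuple[float, ArchivedStudy]]:
    """Brute-force k-nearest-neighbor search by cosine similarity.

    Returns up to k (similarity, study) pairs, most similar first.
    """
    scored = ((cosine_similarity(query, s.embedding), s) for s in archive)
    # The key compares only the similarity score, so studies themselves
    # never need to be orderable.
    return heapq.nlargest(k, scored, key=lambda pair: pair[0])


# Toy usage with made-up 3-dimensional embeddings.
toy_archive = [
    ArchivedStudy("S1", [1.0, 0.0, 0.0], "oncocytoma"),
    ArchivedStudy("S2", [0.9, 0.1, 0.0], "chromophobe RCC"),
    ArchivedStudy("S3", [0.0, 1.0, 0.0], "clear cell RCC"),
]
for score, study in find_similar([1.0, 0.05, 0.0], toy_archive, k=2):
    print(study.study_id, study.outcome, round(score, 3))
```

Because each result carries its linked outcome, the clinical context comes back with the match and needs no second lookup.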

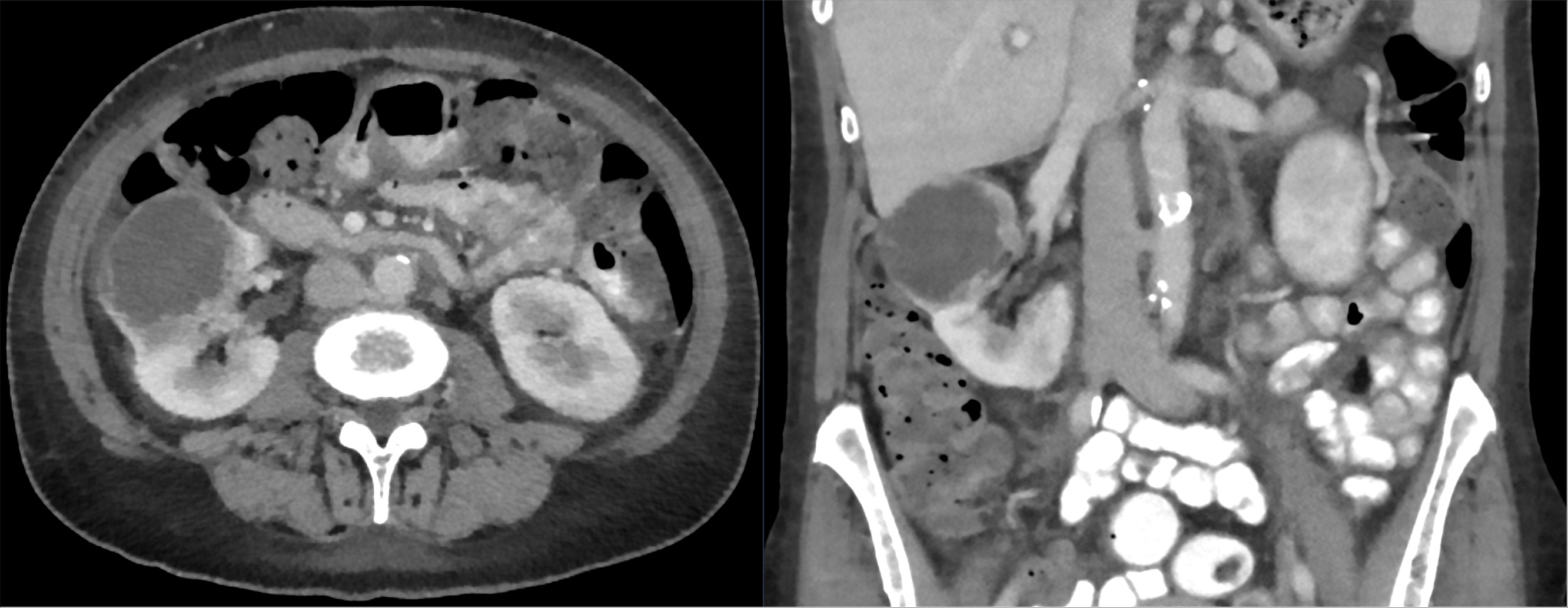

You're staring at an indeterminate 4 cm renal mass with a central scar. Smart Atlas returns 12 visually similar prior cases from the last 5 years: 7 oncocytomas, 3 chromophobe RCCs, and 2 clear cell RCCs, each with its surgical pathology already linked.
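In code terms, the scenario above restricts the similarity-ranked cases to a lookback window, keeps the top k, and tallies their linked pathology into a differential. The sketch below assumes retrieval has already produced cases ranked most-similar-first. `RankedCase`, `years_before`, `summarize_outcomes`, and their fields are hypothetical names. The defaults of 5 years and k = 12 simply mirror the example.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class RankedCase:
    # Hypothetical record for one retrieved prior case; the input list is
    # assumed to be sorted by visual similarity, most similar first.
    study_id: str
    study_date: date
    pathology: str


def years_before(ref: date, years: int) -> date:
    """Same calendar day `years` earlier; Feb 29 falls back to Feb 28."""
    try:
        return ref.replace(year=ref.year - years)
    except ValueError:
        return ref.replace(year=ref.year - years, day=28)


def summarize_outcomes(
    ranked: list[RankedCase], today: date, lookback_years: int = 5, k: int = 12
) -> Counter:
    """Count linked pathology diagnoses among the top-k cases inside the lookback window."""
    cutoff = years_before(today, lookback_years)
    # Filter first, then truncate, so old studies don't use up the k slots.
    recent = [c for c in ranked if c.study_date >= cutoff]
    return Counter(c.pathology for c in recent[:k])
```

Filtering the ranked list before truncating gives the same top k as filtering the archive before ranking, as long as the ranked list covers every candidate.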

Modalities: CT · X-ray · MRI
Retrieval: sub-second